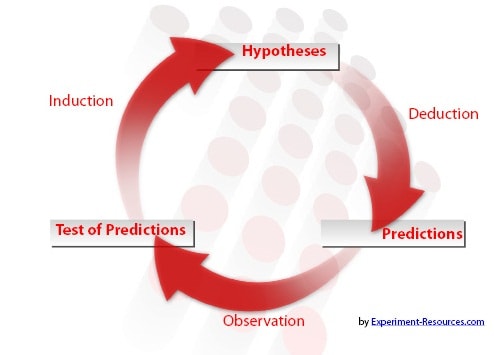

Once you have generated a hypothesis, the process of hypothesis testing becomes important.

Your hypothesis statement took the form of a prediction or speculation. Once the experiment has been carried out, you can now assess whether this prediction was correct or not.

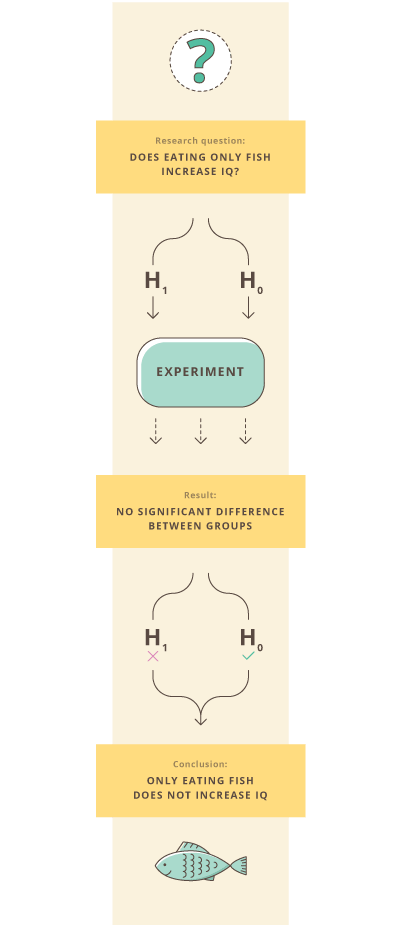

You should therefore have two hypotheses, the alternative [4] and the null [5].

H1 The alternative hypothesis: This is the research hypothesis. It is the scientist’s speculation/prediction at the heart of the experiment.

H0 The null hypothesis: This is a statement that there is NO significant difference between groups or, more generally, no association between two variables. In other words, it describes the outcome in which the research hypothesis fails: the original speculation is not supported.

Conventionally, it is good practice to assume that the null hypothesis is correct until proven otherwise.

This means researchers don’t set out to prove their research hypothesis directly. Instead, they test whether the data allow them to reject the null hypothesis, and only a rejection lends support to the alternative hypothesis. Though most research is conducted with an expectation of how the results will turn out, good practice is to make ample room for the possibility that your hypothesis is wrong.

For testing, you will be analyzing and comparing your results [6] against the null hypothesis, so your research must be designed with this in mind. It is vitally important that the research you design [7] produces results that will be analyzable using statistical tests [8].

Most people are very afraid of statistics [9], and worry about not understanding the processes or messing up the experiments [10]. There really is no need to fear.

Most scientists understand only the basic principles of statistics, and once you have these, modern software provides a whole battery of tools for hypothesis testing [11].

Designing your research only needs a basic understanding of the best practices for selecting samples, isolating testable [12] variables and randomizing groups.

A common statistical method is to compare the means of various groups.

For example, you might have come up with a measurable [13] hypothesis that children will gain a higher IQ if they eat oily fish for a period of time.

Your alternative hypothesis [4], H1 would be

“Children who eat oily fish for six months show an increase in IQ when compared to children who have not.”

Therefore, your null hypothesis [5], H0 would be

“Children who eat oily fish for six months do NOT show an increase in IQ when compared to children who have not.”
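As a compact way to restate the two sentences above, the pair of hypotheses can be sketched in symbols. The notation is an assumption introduced here for illustration: $\mu_{\text{fish}}$ and $\mu_{\text{control}}$ stand for the mean IQ change over six months in the oily-fish group and the control group.

$$
H_0:\ \mu_{\text{fish}} \le \mu_{\text{control}}
\qquad\qquad
H_1:\ \mu_{\text{fish}} > \mu_{\text{control}}
$$

Written this way, the two statements cover every possible outcome between them, so rejecting one leaves only the other.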

In other words, with the experiment design [14], you will be measuring whether the IQ change of children fed oily fish deviates from the change seen in the control group, which is taken to represent the normal condition.

From IQ testing of the control group [15], you find that the control group has a mean IQ of 100 before the experiment and 100 afterwards, or no increase. This is the mean against which the sample group will be tested.

The children fed fish show an increase from 100 to 106.
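To make the comparison concrete, here is a minimal Python sketch of how the mean change in each group could be computed. The scores are invented for illustration and chosen only so that the group means match the figures in the text; the names `mean_gain`, `control_before` and so on are hypothetical.

```python
from statistics import mean

# Hypothetical IQ scores, invented for illustration (not data from any study).
control_before = [98, 102, 100, 97, 103]
control_after = [99, 101, 100, 98, 102]
fish_before = [99, 101, 100, 98, 102]
fish_after = [104, 108, 107, 103, 108]

def mean_gain(before, after):
    """Average per-child change in score between the two measurements."""
    return mean(a - b for b, a in zip(before, after))

control_gain = mean_gain(control_before, control_after)  # control: 100 -> 100
fish_gain = mean_gain(fish_before, fish_after)           # fish group: 100 -> 106

print("control gain:", control_gain)
print("fish gain:", fish_gain)
```

A difference in mean gain is only a description of the samples. Whether it is large relative to the scatter in the scores is a separate question that needs a statistical test.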

So, does this mean that we can reject the null hypothesis and conclude that our alternative hypothesis was in fact true?

There does appear to be an increase, but this is where statistics [9] enters the hypothesis testing process [1]. You need to test whether the increase is significant [16], or whether experimental error [17] and the natural spread of the scores (their standard deviation [18]) could account for the difference.

On the surface, a result may appear to human intuition to be meaningful. But statistical testing can show us whether the result we see is statistically meaningful and big enough to draw any conclusions.

Using an appropriate test, the researcher compares the two means, taking into account the size of the increase, the number of data samples and the spread of scores within each group. The researchers may use a statistical test called a t-test, which produces a test statistic whose p-value can be looked up in a table or computed by software. This result [6] shows whether the researcher can have confidence [19] in the results.
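As a rough illustration of comparing two group means, the sketch below computes a Welch t statistic and then estimates a one-sided p-value with a permutation test, using only the Python standard library. The permutation test is swapped in here for the table lookup because the standard library has no t-distribution; it is not the method the article prescribes. The per-child gains are invented (group means 0 and 6, with a wide spread), and the function names are hypothetical.

```python
import random
from math import sqrt
from statistics import mean, variance

# Hypothetical per-child IQ gains, invented for illustration.
control_gains = [10, -12, 4, -6, 14, -9, 3, -4]   # mean 0
fish_gains = [18, -7, 12, -3, 20, -5, 9, 4]       # mean 6

def welch_t(a, b):
    """t statistic for the difference in means, not assuming equal variances."""
    standard_error = sqrt(variance(a) / len(a) + variance(b) / len(b))
    return (mean(a) - mean(b)) / standard_error

def permutation_p_value(a, b, n_resamples=10_000, seed=0):
    """One-sided p-value: the share of random relabelings of the children
    whose difference in means is at least as large as the observed one."""
    rng = random.Random(seed)
    observed = sum(a) / len(a) - sum(b) / len(b)
    pooled = list(a) + list(b)
    hits = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)
        group_a, group_b = pooled[:len(a)], pooled[len(a):]
        diff = sum(group_a) / len(group_a) - sum(group_b) / len(group_b)
        if diff >= observed:
            hits += 1
    return hits / n_resamples

t_stat = welch_t(fish_gains, control_gains)
p_value = permutation_p_value(fish_gains, control_gains)
print(f"t = {t_stat:.2f}, p = {p_value:.3f}")
```

With these made-up numbers the t statistic is about 1.22: the 6-point difference is small relative to the scatter within each group, which is the kind of situation in which an apparent increase fails to reach significance.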

If the p-value is below a chosen threshold (the significance level), the researchers can conclude that the difference is unlikely to be due to chance or error alone. This allows for the rejection of the null hypothesis. In our example, the 6-point difference does not in fact prove to be significant.

Thus, many experiments provide results that go against our expectations. While casual observation may show a difference or relationship between two groups, it may not be a statistically significant difference.

A well-worded hypothesis will mean that if we reject H0, we must logically accept H1. In other words, there is either a change or there is no change; there is no other possibility.

Remember, not rejecting the null hypothesis is not the same as accepting it. It means only that this particular experiment failed to show that oily fish had an effect upon IQ. This principle lies at the very heart of hypothesis testing.

The exact type of statistical test [16] used depends upon many things, including the field, the type of data and the sample size [20].

The vast majority of scientific research is ultimately tested [16] by statistical methods, all giving a degree of confidence in the results.

For most disciplines, the researcher uses a significance level of 0.05, meaning that results at least as extreme as those observed would occur only 5% of the time if the null hypothesis were true.

For some scientific disciplines, the required level is 0.01, meaning such results would occur only 1% of the time under the null hypothesis. Whatever the level, comparing the p-value with the significance level determines whether the null [5] is rejected in favor of the alternative [4], a crucial part of hypothesis testing.
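The decision rule itself is simple enough to sketch in a few lines of Python. The function name `decide` and the example p-value of 0.03 are assumptions made for illustration; the wording of the returned strings reflects the point that a null hypothesis is never "accepted", only rejected or not rejected.

```python
def decide(p_value, alpha=0.05):
    """Compare a p-value with the chosen significance level alpha."""
    if not 0.0 <= p_value <= 1.0:
        raise ValueError("p_value must lie between 0 and 1")
    return "reject H0" if p_value < alpha else "fail to reject H0"

# The same hypothetical result judged at the two levels mentioned above.
print(decide(0.03, alpha=0.05))  # reject H0
print(decide(0.03, alpha=0.01))  # fail to reject H0
```

The same result can therefore count as significant in one discipline and not in another, which is why the significance level should be fixed before the data are analyzed.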

Links

[1] https://explorable.com/hypothesis-testing

[2] https://explorable.com/users/martyn

[3] https://explorable.com/users/Lyndsay%20T%20Wilson

[4] https://explorable.com/research-hypothesis

[5] https://explorable.com/null-hypothesis

[6] https://explorable.com/statistically-significant-results

[7] https://explorable.com/research-designs

[8] https://explorable.com/statistical-hypothesis-testing

[9] https://explorable.com/statistics-tutorial

[10] https://explorable.com/conducting-an-experiment

[11] http://en.wikipedia.org/wiki/Statistical_hypothesis_testing

[12] https://explorable.com/testability

[13] https://explorable.com/scientific-measurements

[14] https://explorable.com/experimental-research

[15] https://explorable.com/scientific-control-group

[16] https://explorable.com/significance-test

[17] https://explorable.com/type-I-error

[18] https://explorable.com/measurement-of-uncertainty-standard-deviation

[19] https://explorable.com/statistics-confidence-interval

[20] https://explorable.com/statistical-significance-sample-size